There’s a statistic that’s been sitting with me for a while:

84% of designers use AI during exploration, but only 39% use it during delivery.

That gap, nearly half of us dropping AI at the moment the work gets real, is what this project is about.

I’ve been running an RSM (Rapid Speed Month) project at Automattic, looking at where AI actually fits in how designers work. Not the polished version of the workflow, but the messy, lived one: the handoffs, the lost context, the decisions made in the wrong thread at the wrong time.

Where the idea came from

The assumption in most AI-in-design conversations is that exploration is the hard part: ideation, variations, visual generation. But in our experience, exploration is where designers feel most comfortable experimenting with AI. What’s harder, and where work consistently falls through the cracks, is everything in between: feedback that lives in five different tools, decisions that never get documented, and projects that start from scratch because nothing from previous attempts is recorded or findable.

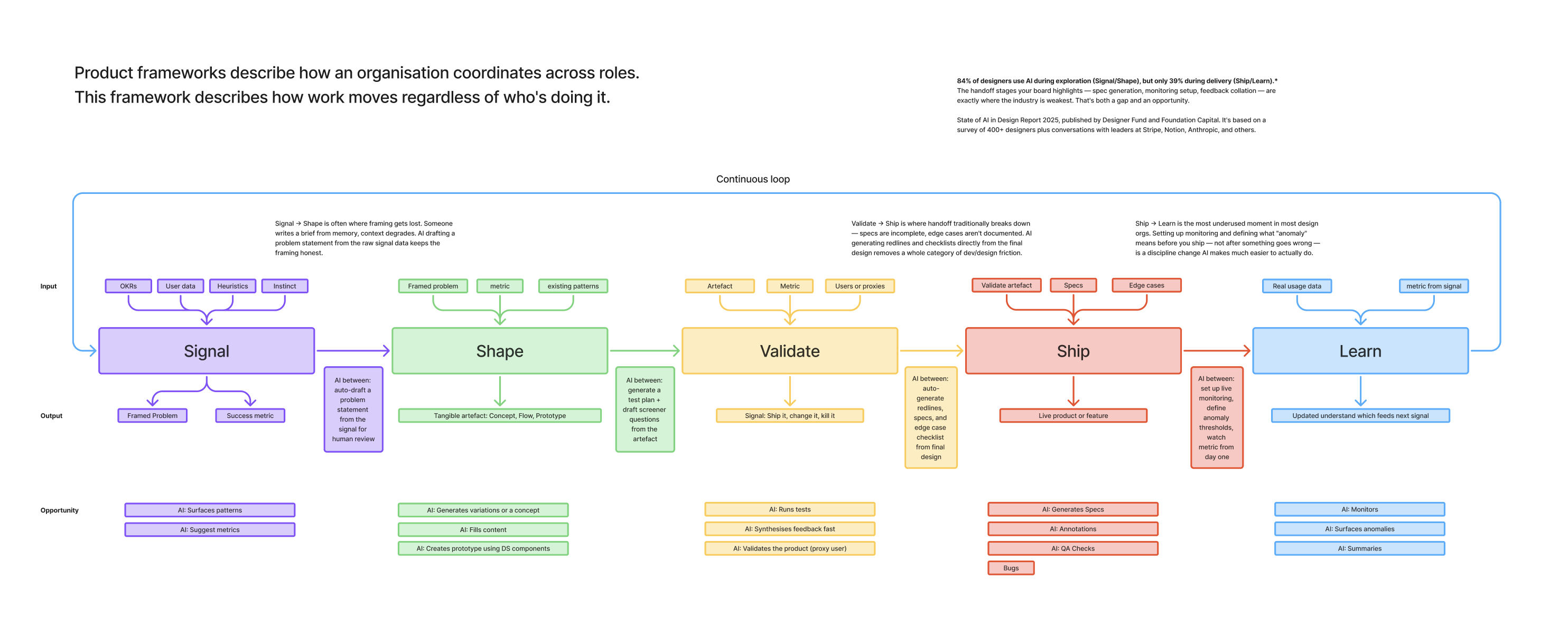

We started mapping the design process not as a linear sequence of roles, but as a series of stages that work moves through regardless of who’s doing it: Signal, Shape, Validate, Ship, Learn. At each stage, we asked a simple question: where could AI reduce friction, and where does human judgment need to stay central?

The answer wasn’t surprising, but naming it clearly was useful. AI can do a lot of the mechanical work: aggregating, connecting, and surfacing. What it can’t do is make the call, and that distinction became our design principle.

What we found

Two gaps stood out. The first is at Validate: the feedback management problem. A designer working on a Figma prototype, a staging URL, or a code PR gets feedback in completely different places depending on where the artifact lives: Slack threads, Linear comments, GitHub review comments, Figma comments (if there’s a Figma frame at all), and meeting notes. None of these talk to each other, so decisions get made implicitly and in fragments, and nobody has a clear record of what was agreed or why.

The second is at Signal: the kickoff problem. Every project effectively starts cold, with a designer rebuilding context from scratch, re-collecting references, and re-establishing what we already know about the surface area. The lessons from the last shipped project don’t automatically travel to the next one. This is the same problem seen from the other end: Learn doesn’t feed back into Signal the way it should.

What we’re building

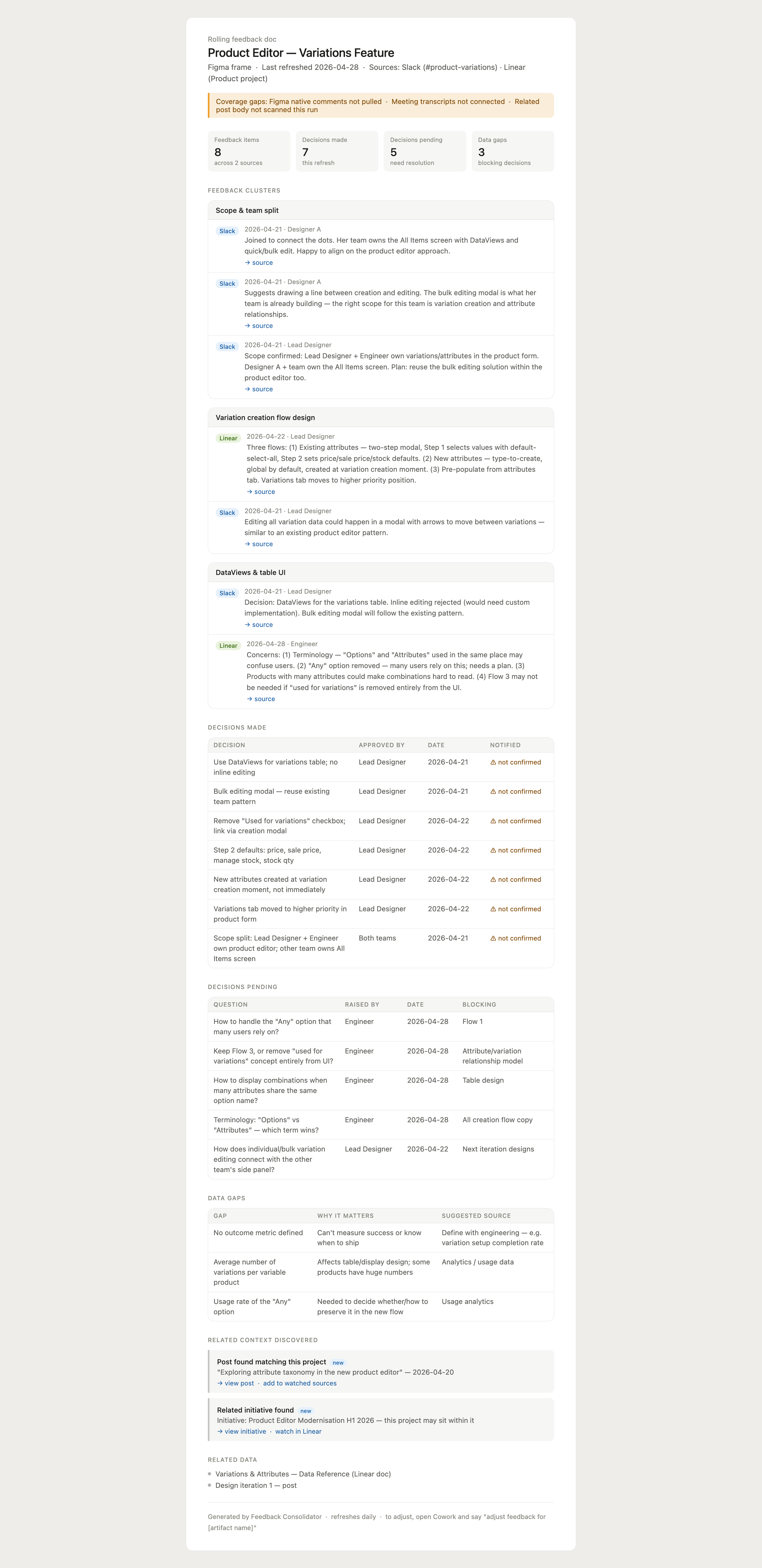

The first tool we’ve built addresses the Validate gap: a Feedback Consolidator. The idea is straightforward. A designer points it at whatever the artifact actually is (a staging URL, a Figma frame, a PR, a Figma Make prototype), and it watches the places where feedback is happening: Slack channels, Linear comments, GitHub PR reviews, Figma comments, and Fireflies and Granola meeting transcripts. It maintains a single rolling document with feedback clustered by theme, decisions made (with provenance), decisions still pending, and data gaps flagged where signal is missing.

An example of the report you receive.

It matters not just what was decided, but who said it, where, and whether it actually reached the people who needed to hear it.
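To make the shape of that rolling document a little more concrete, here is a minimal Python sketch of one way its contents could be modelled. It is not the Feedback Consolidator’s actual implementation: the class names (`FeedbackItem`, `Decision`), the `build_report` function, the sample data, and the assumption that each feedback item already carries a theme label are all hypothetical. It groups feedback by theme, splits decisions into made and pending, keeps provenance (who decided and in which source), lists decisions that haven’t yet reached everyone who needs them, and treats any watched source with no activity as a data gap.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical data model for illustration only; not the tool's real schema.


@dataclass
class FeedbackItem:
    source: str  # where it was said, e.g. "slack", "linear", "github", "figma"
    author: str
    theme: str   # assumption: a theme label has already been assigned upstream
    text: str


@dataclass
class Decision:
    summary: str
    decided_by: Optional[str]  # None means the decision is still pending
    source: str                # provenance: the tool or thread where it was made
    needs_to_reach: set[str] = field(default_factory=set)  # who must hear about it
    reached: set[str] = field(default_factory=set)         # who has heard about it

    def unreached(self) -> set[str]:
        """People or teams who still need to hear about this decision."""
        return self.needs_to_reach - self.reached


def build_report(feedback: list[FeedbackItem],
                 decisions: list[Decision],
                 watched_sources: list[str]) -> dict:
    """Assemble one rolling report from feedback gathered across many tools."""
    # Cluster feedback by its (pre-assigned) theme.
    themes: dict[str, list[FeedbackItem]] = defaultdict(list)
    for item in feedback:
        themes[item.theme].append(item)

    made = [d for d in decisions if d.decided_by is not None]
    pending = [d for d in decisions if d.decided_by is None]

    # Assumption: a watched source with no feedback or decisions is a data gap.
    active = {item.source for item in feedback} | {d.source for d in decisions}
    gaps = sorted(set(watched_sources) - active)

    # Decisions that were made but haven't reached everyone who needs them.
    unreached = {d.summary: sorted(d.unreached()) for d in made if d.unreached()}

    return {
        "themes": dict(themes),
        "decisions_made": made,
        "decisions_pending": pending,
        "data_gaps": gaps,
        "unreached": unreached,
    }


if __name__ == "__main__":
    # Made-up sample data, purely illustrative.
    report = build_report(
        feedback=[
            FeedbackItem("slack", "ana", "navigation", "Tabs feel hidden"),
            FeedbackItem("github", "ben", "navigation", "Move tabs above the fold"),
        ],
        decisions=[
            Decision("Move tabs above the fold", "ana", "slack", {"eng", "pm"}, {"pm"}),
            Decision("Keep the dark mode toggle?", None, "linear"),
        ],
        watched_sources=["slack", "github", "linear", "figma"],
    )
    print(report["data_gaps"])  # ['figma']
    print(report["unreached"])  # {'Move tabs above the fold': ['eng']}
```

Keeping provenance on each decision, rather than only its summary, is what lets a report like this answer who said it, where, and who still hasn’t heard.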

We’ve also just added something that came out of testing: the tool now proactively searches the broader network for mentions of the project, such as internal posts and related strategic initiatives, even if nobody thought to link them. The most useful context is often the stuff you didn’t know existed.

Where it goes next

The Feedback Consolidator is the first of what I think will be three connected tools. The second is a Decision Gate Pack: a brief that fires when a project is approaching a kill/continue/refine moment, pulling together what was learned, the user signal, the cost, and a recommendation. The third is Ship Track, which triggers at the start of a project and draws on lessons from past retros and on the output of the other skills across the project timeline.

The thing that unifies all three is the same conviction: the value of AI in design work isn’t in generating more, it’s in losing less. Less lost context, fewer decisions made in the dark, less time spent rebuilding what already exists somewhere.

The Feedback Consolidator is available to test now. If you’re a designer, engineer, or PM who recognises any of this, I’d love to hear what you find.
